Jeremy Schneider

Paranoid SQL Execution on Postgres

Suppose that you want to be completely over-the-top paranoid about making sure that when you execute some particular SQL statement on your Postgres database, you’re doing it in the safest and least risky way.

For example, suppose it’s the production database behind your successful startup’s main commercial website. If anything causes queries to block or pause for even a few minutes, people will quickly be tweeting about how they can’t place orders, and it will hurt both your company’s revenue and its reputation.

You know that it’s really important to save regular snapshots and keep a history of important metrics. You’re writing some of your own code to capture a few specific stats like the physical size of your most important application tables or maybe the number of outstanding orders over time. Maybe you’re writing some Java code that gets scheduled by Quartz, or maybe some Python code that you’ll run with cron.

Or another situation might be that you’re planning to make an update to your schema – adding a new table, adding a new column to an existing table, modifying a constraint, etc. You plan to execute this change as an online operation during the weekly period of lowest activity on the system – maybe it’s very late Monday night, if you’re in an industry that’s busiest over weekends.

How can you make sure that your SQL is executed on the database in the safest possible way?

Here are a few ideas I’ve come up with:

- Setting connect_timeout to something short, for example 2 seconds.

- Setting lock_timeout to something appropriate. For example, 2ms on queries that shouldn’t be doing any locking. (I’ve seen entire systems brown-out because a “quick” DDL had to get in line behind an app transaction, and then all the new app transactions piled up behind the DDL that was waiting!)

- Setting statement_timeout to something reasonable for the query you’re running – thus putting an upper bound on execution time.

- Using an appropriate client-side timeout, for cases when the server fails to kill the query using statement_timeout. For example, in Java the Statement class has native support for this.

- When writing SQL, fully qualify names of tables and functions with the schema/namespace. (This can be a security feature; I have heard of attacks where someone manages to change the search_path for connections.)

- Check at least one explain plan and make sure it’s doing what you would expect it to be doing, and that it seems likely to be the most efficient way to get the information you need.

- Don’t use system views that join in unneeded data sources; go direct to needed raw relation or a raw function.

- Access each data source exactly once, never more than once. In that single pass, get all data that will be needed. Analytic or window functions are very useful for avoiding self-joins.

- Restrict the user to minimum needed privileges. For example, the pg_read_all_stats role on an otherwise unprivileged user might be useful.

- Make sure your code has back-off logic for retries when failures or unexpected results are encountered.

- Prevent connection pile-ups resulting from database slowdowns or hangs. For example, by using a dedicated client-side connection pool with dynamic sizing entirely disabled or with a small max pool size.

- Run the query against a physical replica/hot standby (e.g. pulling a metric for the physical size of important tables) or logical copy (e.g. any query against application data), instead of running the query against the primary production database. (However, note that when hot_standby_feedback is enabled, long-running transactions on the PostgreSQL hot standby can still impact the primary system.)

- For all DDL, carefully check the level of locking that it will require and test to get a feel for possible execution time. Watch out for table rewrites. Many DDLs that used to require a rewrite no longer do in current versions of PostgreSQL, but there are still a few out there. ALTER TABLE statements must be evaluated very carefully. Frankly ALTER TABLE is a bit notorious for being unclear about which incantations cause table rewrites and which ones don’t. (I have a friend who just tests every specific ALTER TABLE operation first on an empty table and then checks if pg_class changes show that a rewrite happened.)
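A few of the ideas in this list (short connection timeout, lock and statement timeouts, back-off on retries) can be sketched in client code. The following is a minimal Python sketch under stated assumptions, not a definitive implementation: `build_conninfo` and `run_with_backoff` are hypothetical helper names, the host, database and user in the usage note are placeholders, and the 5s statement timeout is an arbitrary example value. It builds a libpq-style connection string that sets connect_timeout and passes lock_timeout and statement_timeout through the `options` parameter, and it wraps a call in exponential back-off with jitter.

```python
import random
import time


def build_conninfo(host, dbname, user, connect_timeout_s=2,
                   lock_timeout="2ms", statement_timeout="5s"):
    """Build a libpq keyword/value connection string with conservative timeouts."""
    # The libpq "options" parameter passes -c settings to the server at
    # session start, so every statement on this connection inherits them.
    options = f"-c lock_timeout={lock_timeout} -c statement_timeout={statement_timeout}"
    return (f"host={host} dbname={dbname} user={user} "
            f"connect_timeout={connect_timeout_s} options='{options}'")


def run_with_backoff(action, attempts=4, base_delay_s=1.0, max_delay_s=30.0,
                     sleep=time.sleep):
    """Call action(); on failure wait exponentially longer (plus jitter) and retry."""
    for attempt in range(attempts):
        try:
            return action()
        except Exception:
            if attempt == attempts - 1:
                raise  # out of retries: surface the failure to the caller
            delay = min(max_delay_s, base_delay_s * 2 ** attempt)
            # Jitter keeps many clients from retrying in lock-step.
            sleep(delay + random.uniform(0, delay / 2))
```

A string such as `build_conninfo("db.example.internal", "shop", "metrics")` could then be handed to whichever driver you use (for example `psycopg2.connect(conninfo)`, if that is your driver). A client-side timeout is still worth adding on top, since these settings rely on the server enforcing them.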

What am I missing? What other ideas are out there for executing SQL in Postgres with a “paranoid” level of safety?

Note: see also Column And Table Redefinition With Minimal Locking

PostgreSQL Invalid Page and Checksum Verification Failed

At the Seattle PostgreSQL User Group meetup this past Tuesday, we got onto the topic of invalid pages in PostgreSQL. It was a fun discussion and it made me realize that it’d be worth writing down a bunch of the stuff we talked about – it might be interesting to a few more people too!

Invalid Page In Block

You see an error message that looks like this:

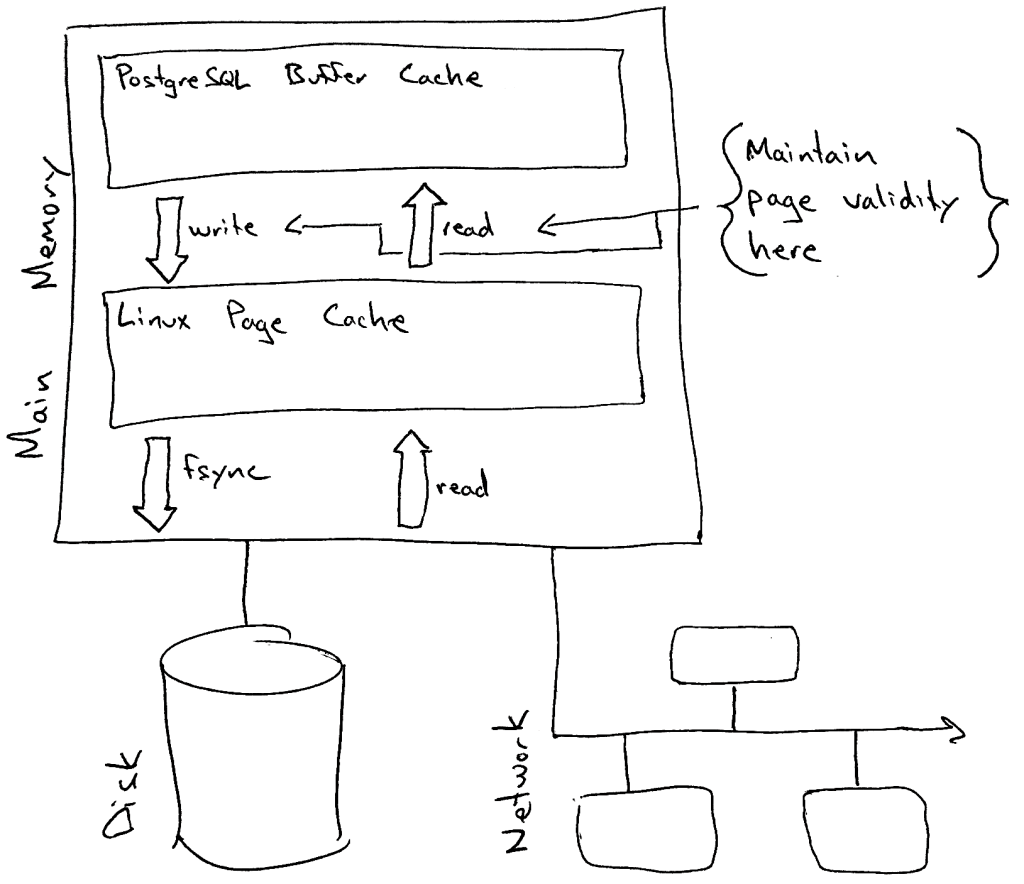

    ERROR: invalid page in block 1226710 of relation base/16750/27244

First and foremost – what does this error mean? I like to think of PostgreSQL as having a fairly strong “boundary” between (1) the database itself and (2) the operating system [and by extension everything else… firmware, disks, network, remote storage, etc]. PostgreSQL maintains page validity primarily on the way in and out of its buffer cache.

What does this mean in practice? Suppose there’s a physical memory failure and somehow the ECC parity is unable to detect it. This means that a little bit of physical memory on the server now has incorrect garbage and the correct data from that memory is lost.

- If the garbage bytes map to part of the kernel page cache, then when PostgreSQL tries to copy the page into its buffer cache it will (if possible) detect that something is wrong, refuse to poison its buffer cache with this invalid 8k page, and error out any queries that require this page for processing with the ERROR message above.

- If the garbage bytes map to part of PostgreSQL’s database buffer cache, then PostgreSQL will quite happily assume nothing is wrong and attempt to process the data on the page. Results are unpredictable; probably all sorts of ERROR messages, crashes and failure modes could result – or maybe even incorrect data returned with no ERROR message at all. (Note that this is probably the case for nearly all software… and also note that ECC is pretty good.)

PostgreSQL has two main “validity checks” that it performs on pages. You can read the code in the function PageIsVerified() but I’ll summarize here. You can tell from your error message which validity check failed. It depends on whether you see a second additional WARNING right before the ERROR. The warning would look like this:

    WARNING: page verification failed, calculated checksum 3482 but expected 32232

- If the above warning is not present, this means the page header failed a basic sanity check. This could conceivably be caused by problems either inside or outside of PostgreSQL.

- If you see the above warning (page verification failed), this means the checksum recorded in the block did not match the checksum calculated for the block. This most likely indicates that there was a problem outside of (underneath) the database – operating system, memory, networking, storage, etc.

As of when I’m writing this article in 2019, the following basic sanity checks are performed on the page header:

- There are 16 bits reserved for page flag bits; at present only three are used and the other 13 bits should always be zero/off.

- Every page is divided into four parts (header, free space, tuples, special space). Offsets for the divisions are stored as 16-bit numbers in the page header; the offsets should go in order and should not have a value pointing off the page.

- The offset of the special space should always be aligned.
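The three header checks above can be sketched in a few lines. This is an illustrative Python sketch, not PostgreSQL's own code (the real logic lives in PageIsVerified()): `header_looks_sane` is a hypothetical name, and the little-endian byte order, the 8 kB block size and the 8-byte alignment are assumptions that match a default build on x86-64.

```python
import struct

BLCKSZ = 8192             # default PostgreSQL block size (assumed)
VALID_FLAG_BITS = 0x0007  # the three flag bits currently in use
MAXALIGN = 8              # typical alignment on 64-bit platforms (assumed)


def header_looks_sane(page: bytes) -> bool:
    """Apply the basic page-header checks described above to one raw page."""
    # Header layout (little-endian): 8-byte LSN as two 32-bit halves, then
    # 16-bit checksum, flags, lower, upper, special and size/version fields.
    _lsn_hi, _lsn_lo, _checksum, flags, lower, upper, special, _size_ver = \
        struct.unpack_from("<IIHHHHHH", page, 0)
    if flags & ~VALID_FLAG_BITS:
        return False  # an unused flag bit is set
    if not (lower <= upper <= special <= BLCKSZ):
        return False  # offsets out of order or pointing off the page
    if special % MAXALIGN != 0:
        return False  # special space offset is not aligned
    return True
```

Note that these checks only look at a handful of header bytes, which is why garbage written into the middle of a page passes them and is only caught when checksums are enabled.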

PostgreSQL version 9.3 (released in 2013) added the ability to calculate a checksum on data pages and store the checksum in the page. There are two inputs to the checksum: (1) every single byte of the data page, with zeros in the two bytes where the checksum will be stored later and (2) the page offset/address (the block number). This means that PostgreSQL doesn’t just detect if a byte is changed in the page – it also detects if a perfectly valid page gets somehow dropped into the wrong place.
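The effect of those two inputs can be illustrated with a small sketch. This is deliberately not PostgreSQL's algorithm: `zlib.crc32` is swapped in as a stand-in for the real hash, `sketch_page_checksum` is a hypothetical name, and the checksum position (right after the 8-byte LSN) and the final fold to a non-zero 16-bit value are assumptions made for illustration. The values it produces will not match real page checksums.

```python
import zlib

CHECKSUM_OFFSET = 8  # assumed: the 16-bit checksum follows the 8-byte page LSN


def sketch_page_checksum(page: bytes, blkno: int) -> int:
    """Illustrative 16-bit page checksum; crc32 stands in for PostgreSQL's hash."""
    scratch = bytearray(page)
    # Input 1: every byte of the page, with the checksum field itself zeroed.
    scratch[CHECKSUM_OFFSET:CHECKSUM_OFFSET + 2] = b"\x00\x00"
    digest = zlib.crc32(bytes(scratch))
    # Input 2: the block number, so a valid page in the wrong place fails.
    digest ^= blkno
    # Fold to 16 bits, keeping zero out of the result range.
    return digest % 65535 + 1
```

Because the checksum field is zeroed before hashing, stamping the result into the page does not change the value computed on the next read, while the same bytes presented as a different block number give a different result.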

Checksums are not maintained for blocks while they are in the shared buffers – so if you look at a buffer in the PostgreSQL page cache with pageinspect and you see a checksum value, note that it’s probably just leftover from the last read but wouldn’t have been maintained when the page was changed. The checksum is calculated and stamped onto the page when the page is written out of the buffer cache into the operating system page cache (remember the pages get flushed to disk later by a separate fsync call).

The checksum algorithm is specifically designed to take advantage of SIMD instructions. The slowest part of the algorithm is multiplication, so if possible PostgreSQL will be compiled to perform 32 multiplications at a time in parallel. In 2013 there were two platforms specifically documented to support this: x86 SSE4.1 and ARM NEON. The implementation is specifically tuned for optimal performance on x86 SSE. This is an important point actually – particularly for folks running PostgreSQL on embedded devices – PostgreSQL certainly compiles and works well on a lot of platforms, but evidently the checksum implementation is explicitly optimized to run the fastest on Intel. (To be clear… I think everyone should use checksums on every platform unless you have a really really good reason not to – just don’t be surprised if you start comparing benchmarks with Intel and you see a difference!)

For folks interested in digging a little more into the history… here’s the original commit using a CRC algorithm which never shipped in an actual PostgreSQL release (Simon Riggs, Jeff Davis and Greg Smith), here’s the subsequent commit introducing the FNV-1a algorithm instead of CRC which is what we still use today (Ants Aasma), and here’s the commit doing the major refactor which moved the algorithm into a header file for easier external use (Tom Lane).

More Ways To Check Validity

At the SEAPUG meetup this led to a discussion about checking validity. Do checksums cover everything? (hint: no) Are there more ways we can validate our databases? (hint: yes)

I haven’t yet made a full list of which files are covered by checksums and which ones aren’t, but I know that not everything is. For example: I’m pretty sure that the visibility map and SLRU files aren’t covered with checksums. But for what it’s worth, there are two extra tools we can use for verification in PostgreSQL databases:

- The amcheck extension can scan a B-Tree index for a number of logical problems – for example, verifying that all B-Tree pages have items in “logical” order. (This could be useful, for example, if you’re not specifying ICU collation and you recently upgraded your operating system collation libraries… since PostgreSQL uses OS collation by default.)

- The pg_visibility extension includes two functions to check for corruption in the visibility map – pg_check_frozen() and pg_check_visible().

Finally, what if you actually run into a checksum failure? What should you do, and are there any additional tools you should know about?

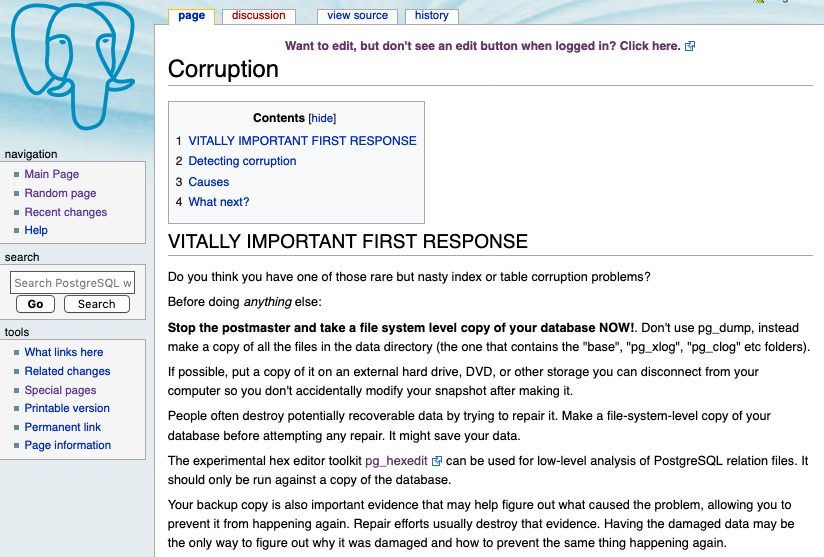

First of all – on any database – there are a few things you should always do immediately when you see something indicating that a data corruption may have occurred:

- Verify that your backup retention and your log file retention are sufficiently long – I recommend at least a month (this is a Happiness Hint). You never know how long the investigation might take, or how long ago something important might have happened.

- Take a moment to articulate and write out the impact to the business. (Are important queries failing right now? Is this causing an application outage?) This seems small but it can be very useful in making decisions later. Don’t exaggerate the impact but don’t understate it either. It can also be helpful to note important timelines that you already know about. For example: management is willing to use yesterday’s backup and take a day of data loss to avoid a 12 hour outage, but not to avoid a 4 hour outage …or… management needs a status update at 11:00am Eastern Time.

- If there’s a larger team working on the system in question, communicate a freeze on changes until things are resolved.

- Make a list or inventory of all copies of the data. Backups, physical replicas or hot standbys, logical replicas, copies, etc. Sometimes the very process of making this list can immediately give you ideas for simple solutions (like checking if a hot standby has the block intact). The next thing you probably want to do is check all items in this list to see if they have a valid copy of the data in question. Do not take any actions to remediate the problem right away; collect all of the information first. The data you collect now might be useful during RCA investigation after you’re back online.

- If there was one checksum failure, then you probably want to make sure there aren’t more.

  - If it’s a small database, consider whether you can scan the whole thing and verify the checksum on every single block.

  - If it’s a large database, consider whether you can at least scan all of the system/catalog tables and perhaps scan the tables which are throwing errors in their entirety. (PostgreSQL stops on the first error, so there isn’t an easy way to check if other blocks in the same table also have checksum problems.)

- A few general best practices… (1) have a second person glance at your screen before you execute any actual changes, (2) never delete anything but always rename/move instead, (3) when investigating individual blocks, also look at the block before and the block after to verify they look like what you’d normally expect, (4) test the remediation plan before running it in production, and (5) document everything. If you’ve never seen Paul Vallée’s FIT-ACER checklist then it’s worth reviewing.

There’s no single prescriptive process for diagnosing the scope of the problem and finding the right path forward for you. It involves learning what you need to know about PostgreSQL, a little creative thinking about possible resolutions, and balancing the needs of the business (for example, how long can you be down and how much data loss can you tolerate).

That being said, there are a few tools I know about which can be very useful in the process. (And there are probably more that I don’t know about; please let me know and I can add them to this list!)

Data investigation: Unix/Linux Commands

You might be surprised at what you can do with the tools already installed on your operating system. I’ve never been on a unix system that didn’t have dd and od installed, and I find that many Linux systems have hexdump and md5sum installed as well. A few examples of how useful these tools are: dd can extract the individual block with invalid data on the primary server and extract the same block on the hot standby, then od/hexdump can be used to create a human-readable textual dump of the binary data. You can even use diff to find the differences between the blocks. If you have a standby cluster with storage-level replication then you could use md5sum to see at a glance if the blocks match. (Quick word of caution on comparing hot standbys: last I checked, PostgreSQL doesn’t seem to maintain the free space identically on hot standbys, so the checksums might differ on perfectly healthy blocks. You can still look at the diff and verify whether free space is the only difference.) Drawbacks: low-level utilities can only do binary dumps but cannot interpret the data. Also, utilities like dd are “sharp knives” – powerful tools which can cause damage if misused!
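The same read-only extraction and comparison can be sketched in Python, which avoids any risk of swapping dd's if= and of= arguments. This is a minimal sketch under stated assumptions rather than a tested tool: `locate_block`, `read_block` and `blocks_match` are hypothetical names, and the constants assume the default 8 kB block size and 1 GB segment files (131072 blocks per segment). SHA-256 is used in place of md5sum purely as a digest for comparison.

```python
import hashlib

BLCKSZ = 8192                # default block size (assumed)
BLOCKS_PER_SEGMENT = 131072  # 1 GB segment files / 8 kB blocks (assumed defaults)


def locate_block(relation_path: str, blkno: int):
    """Map a relation-wide block number to (segment file, byte offset)."""
    segment, offset_in_segment = divmod(blkno, BLOCKS_PER_SEGMENT)
    # Segment 0 has no suffix; later segments are named <relfile>.<segment>.
    path = relation_path if segment == 0 else f"{relation_path}.{segment}"
    return path, offset_in_segment * BLCKSZ


def read_block(relation_path: str, blkno: int) -> bytes:
    """Read one block; the file is opened read-only and never modified."""
    path, byte_offset = locate_block(relation_path, blkno)
    with open(path, "rb") as f:
        f.seek(byte_offset)
        block = f.read(BLCKSZ)
    if len(block) != BLCKSZ:
        raise ValueError(f"short read for block {blkno} in {path}")
    return block


def blocks_match(primary_path: str, standby_path: str, blkno: int) -> bool:
    """Compare the same block from two copies of a relation by digest."""
    a = hashlib.sha256(read_block(primary_path, blkno)).hexdigest()
    b = hashlib.sha256(read_block(standby_path, blkno)).hexdigest()
    return a == b
```

As with md5sum, a mismatch between a primary and a hot standby is only a starting point: the caveat above about free space differing on healthy blocks still applies.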

For a great example of using dd and od, read the code in Bertrand Drouvot’s pg_toolkit script collection.

Data investigation and checksum verification: pg_filedump

This is a crazy awesome utility and I have no idea why it’s not in core PostgreSQL. It makes an easy-to-read textual dump of the binary contents of PostgreSQL data blocks. You can process a whole file or just specify a range of blocks to dump. It can verify checksums and it can even decode the contents of the tuples. As far as I can tell, it was originally written by Patrick Macdonald at Red Hat some time in the 2000’s and then turned over to the PostgreSQL Global Development Group for stewardship around 2011. It’s now in a dedicated repository at git.postgresql.org and it seems that Tom Lane, Christoph Berg and Teodor Sigaev keep it alive but don’t invest heavily in it. Drawbacks: be aware that it doesn’t address the race condition with a running server (see Credativ pg_checksums below). For dumping only a block with a checksum problem, this is not an issue since the server won’t let the block into its buffer cache anyway.

Checksum verification: PostgreSQL pg_checksums

PostgreSQL itself starting in version 11 has a command-line utility to scan one relation or everything and verify the checksum on every single block. It’s called pg_verify_checksums in v11 and pg_checksums in v12. Drawbacks: first, this utility requires you to shut down the database before it will run. It will throw an error and refuse to run if the database is up. Second, you can scan a single relation but you can’t say which database it’s in… so if the OID exists in multiple databases, there’s no way to just scan the one you care about.

Checksum verification: Credativ pg_checksums

The fine engineers of Credativ have published an enhanced version of pg_checksums which can verify checksums on a running database. It looks to me like the main case they needed to protect against was the race condition between pg_checksums reading blocks while the running PostgreSQL server was writing those same blocks. Linux of course works on a 4k page size; so if an 8k database page is half written when pg_checksums reads it then we will get a false positive. The version from Credativ however is smart enough to deal with this. Drawbacks: check the github issues; there are a couple notable drawbacks but this project was only announced last week and all the drawbacks might be addressed by the time you read this article. Also, being based on the utility in PostgreSQL, the same limitation about scanning a single relation applies.

Note that both Credativ’s and PostgreSQL’s pg_checksums utilities access the control file, even when just verifying checksums. As a result, you need to make sure you compile against the same version of PostgreSQL code as the target database you’re scanning.

Checksum verification: Satoshi Nagayasu postgres-toolkit

I’m not sure if this is still being maintained, but Satoshi Nagayasu wrote postgres-toolkit quite a long time ago which includes a checksum verification utility. It’s the oldest one I have seen so far – and it still compiles and works! (Though if you want to compile it on PostgreSQL 11 or newer then you need to use the patch in this pull request.) Satoshi’s utility also has the very useful capability of scanning an arbitrary file that you pass in – like pg_filedump but stripped down to just do the checksum verification. It’s clever enough to infer the segment number from the filename and scan the file, even if the file isn’t part of a PostgreSQL installation. This would be useful, for example, if you were on a backup server and wanted to extract a single file from your backup and check if the damaged block has a valid checksum in the backup. Drawbacks: be aware that it doesn’t address the race condition with a running server.

Checksum verification: Google pg_page_verification

Simple program; you pass in a data directory and it will scan every file in the data directory to verify the checksums on all blocks. Published to Github in early 2018. Drawbacks: be aware that it doesn’t address the race condition with a running server. Probably superseded by the built-in PostgreSQL utilities.

Mitigation: PostgreSQL Developer Options

PostgreSQL has hundreds of “parameters” – knobs and buttons you can use to configure how it runs. There are 294 entries in the pg_settings view on version 11. Buried in these parameters are a handful of “Developer Options” providing powerful (and dangerous) tools for mitigating data problems – such as ignore_checksum_failure, zero_damaged_pages and ignore_system_indexes. Read very carefully and exercise great care with these options – when not fully understood, they can have unexpected side effects including unintended data loss. Exercise particular care with the ignore_checksum_failure option – even if you set that in an individual session, the page will be readable to all connections… think of it as poisoning the buffer cache. That being said, sometimes an option like zero_damaged_pages is the fastest way to get back up and running. (Just make sure you’ve saved a copy of that block!) By the way… a trick to trigger a read of one specific block is to run:

    SELECT * FROM table WHERE ctid='(blockno,1)'

Mitigation: Unix/Linux Commands

I would discourage the use of dd to mitigate invalid data problems. It’s dangerous even for experienced engineers; simple mistakes can compound the problem. I can’t imagine a situation where this is a better approach than the zero_damaged_pages developer option and a query to read a specific block. That said, I have seen cases where dd was used to zero out a page.

More About Data Investigation

In order to put some of this together, I’ll just do a quick example session. I’m running PostgreSQL 11.5 on an EC2 instance and I used dd to write a few evil bytes into a couple blocks of my database.

First, let’s start by just capturing the information from the log files:

    $ grep "invalid page" ../log/postgresql.log|sed 's/UTC.*ERROR//'
    2019-10-15 19:53:37 : invalid page in block 0 of relation base/16385/16493
    2019-10-16 22:26:30 : invalid page in block 394216 of relation base/16385/16502
    2019-10-16 22:43:24 : invalid page in block 394216 of relation base/16385/16502
    2019-11-05 23:55:33 : invalid page in block 394216 of relation base/16385/16502
    2019-11-05 23:57:58 : invalid page in block 394216 of relation base/16385/16502
    2019-11-05 23:59:14 : invalid page in block 262644 of relation base/16385/16502
    2019-11-05 23:59:21 : invalid page in block 262644 of relation base/16385/16502
    2019-11-05 23:59:22 : invalid page in block 262644 of relation base/16385/16502
    2019-11-05 23:59:23 : invalid page in block 262644 of relation base/16385/16502
    2019-11-06 00:01:12 : invalid page in block 262644 of relation base/16385/16502
    2019-11-06 00:01:16 : invalid page in block 0 of relation base/16385/16493
    2019-11-06 00:02:05 : invalid page in block 250 of relation base/16385/16492

With a little command-line karate we can list each distinct block and see the first time we got an error on that block:

    $ grep "invalid page" ../log/postgresql.log |
      sed 's/UTC.*ERROR//' |
      awk '{print $1" "$2" "$11" invalid_8k_block "$8" segment "int($8/131072)" offset "($8%131072)}' |
      sort -k3,5 -k1,2 |
      uniq -f2
    2019-11-06 00:02:05 base/16385/16492 invalid_8k_block 250 segment 0 offset 250
    2019-10-15 19:53:37 base/16385/16493 invalid_8k_block 0 segment 0 offset 0
    2019-11-05 23:59:14 base/16385/16502 invalid_8k_block 262644 segment 2 offset 500
    2019-10-16 22:26:30 base/16385/16502 invalid_8k_block 394216 segment 3 offset 1000

So we know that there are at least 4 blocks corrupt. Let’s scan the whole data directory using Credativ’s pg_checksums (without shutting down the database) to see if there are any more blocks with bad checksums:

    $ pg_checksums -D /var/lib/pgsql/11.5/data |& fold -s
    pg_checksums: error: checksum verification failed in file
    "/var/lib/pgsql/11.5/data/base/16385/16502.3", block 1000: calculated checksum
    2ED4 but block contains 4EDF
    pg_checksums: error: checksum verification failed in file
    "/var/lib/pgsql/11.5/data/base/16385/16502.3", block 1010: calculated checksum
    9ECF but block contains ACBE
    pg_checksums: error: checksum verification failed in file
    "/var/lib/pgsql/11.5/data/base/16385/16502.2", block 500: calculated checksum
    5D6 but block contains E459
    pg_checksums: error: checksum verification failed in file
    "/var/lib/pgsql/11.5/data/base/16385/16493", block 0: calculated checksum E7E4
    but block contains 78F9
    pg_checksums: error: checksum verification failed in file
    "/var/lib/pgsql/11.5/data/base/16385/16492", block 250: calculated checksum
    44BA but block contains 3ABA
    Checksum operation completed
    Files scanned: 1551
    Blocks scanned: 624158
    Bad checksums: 5
    Data checksum version: 1

Ah-ha… there was one more bad checksum which didn’t show up in the logs! Next let’s choose one of the bad blocks and dump the contents using unix command line tools.

    $ dd status=none if=base/16385/16492 bs=8192 count=1 skip=250 | od -A d -t x1z -w16
    0000000 00 00 00 00 e0 df 6b b0 ba 3a 04 00 0c 01 80 01 >......k..:......<
    0000016 00 20 04 20 00 00 00 00 80 9f f2 00 00 9f f2 00 >. . ............<
    0000032 80 9e f2 00 00 9e f2 00 80 9d f2 00 00 9d f2 00 >................<
    0000048 80 9c f2 00 00 9c f2 00 80 9b f2 00 00 9b f2 00 >................<
    0000064 80 9a f2 00 00 9a f2 00 80 99 f2 00 00 99 f2 00 >................<
    0000080 80 98 f2 00 00 98 f2 00 80 97 f2 00 00 97 f2 00 >................<

Here we see the page header and the beginning of the line pointers. One thing I think is easy to remember is that the first 8 bytes are the page LSN and the next 2 bytes are the page checksum. Notice that the page checksum bytes contain “ba 3a”, which matches the error message from the scan above (3ABA) once you account for the bytes being stored in little-endian order. Sometimes it can be useful to know just the very top of the page even if you don’t remember anything else!
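To make the byte order concrete, here is a small Python sketch that decodes the LSN and stored checksum from the first row of the dump above. `page_lsn_and_checksum` is a hypothetical helper name, and the little-endian layout is an assumption that holds for x86 servers like the one in this session.

```python
import struct


def page_lsn_and_checksum(page_start: bytes):
    """Decode the page LSN and stored checksum from the first 10 bytes of a page."""
    # Assumed little-endian layout: two 32-bit LSN halves, then the
    # 16-bit checksum.
    lsn_hi, lsn_lo, checksum = struct.unpack_from("<IIH", page_start, 0)
    return f"{lsn_hi:X}/{lsn_lo:X}", f"{checksum:04X}"


# First 16 bytes of block 250, copied from the od output above.
first_row = bytes.fromhex("00 00 00 00 e0 df 6b b0 ba 3a 04 00 0c 01 80 01")
print(page_lsn_and_checksum(first_row))  # ('0/B06BDFE0', '3ABA')
```

The decoded checksum is the 3ABA value that the checksum scan reported as stored in the block.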

This is useful, but let’s try the pg_filedump utility next. This utility takes a lot of options. In this example I’m going to ask it to verify the checksum (-k), only scan one block at offset 250 (-R 250 250) and even to decode the tuples (table row data) to a human-readable format (-D int,int,int,charN). There’s another argument (-f) that can even tell pg_filedump to show hexdump/od style raw data inline but I won’t demonstrate that here.

    $ pg_filedump -k -R 250 250 -D int,int,int,charN base/16385/16492
    *******************************************************************
    * PostgreSQL File/Block Formatted Dump Utility - Version 11.0
    *
    * File: base/16385/16492
    * Options used: -k -R 250 250 -D int,int,int,charN
    *
    * Dump created on: Fri Nov 8 21:48:38 2019
    *******************************************************************
    Block 250 ********************************************************
    <Header> -----
     Block Offset: 0x001f4000         Offsets: Lower 268 (0x010c)
     Block: Size 8192 Version 4                Upper 384 (0x0180)
     LSN: logid 0 recoff 0xb06bdfe0            Special 8192 (0x2000)
     Items: 61                        Free Space: 116
     Checksum: 0x3aba Prune XID: 0x00000000 Flags: 0x0004 (ALL_VISIBLE)
     Length (including item array): 268
     Error: checksum failure: calculated 0x44ba.
    <Data> ------
     Item 1 -- Length: 121 Offset: 8064 (0x1f80) Flags: NORMAL
    COPY: 15251 1 0
     Item 2 -- Length: 121 Offset: 7936 (0x1f00) Flags: NORMAL
    COPY: 15252 1 0
     Item 3 -- Length: 121 Offset: 7808 (0x1e80) Flags: NORMAL
    COPY: 15253 1 0

That was the block header and the first few items. (Item pointer data is displayed first, then the table row data itself is displayed on the following line after the word COPY.) Looking down a little bit, we can even see where I wrote the bytes “badstuff” into a random location in this block – it turns out those bytes landed in the middle of a character field. This means that without checksums, PostgreSQL would not have thrown any errors at all but just returned an incorrect string the next time that row was queried!

    COPY: 15297 1 0
     Item 48 -- Length: 121 Offset: 2048 (0x0800) Flags: NORMAL
    COPY: 15298 1 0
     Item 49 -- Length: 121 Offset: 1920 (0x0780) Flags: NORMAL
    COPY: 15299 1 0 badstuff
     Item 50 -- Length: 121 Offset: 1792 (0x0700) Flags: NORMAL
    COPY: 15300 1 0

It’s immediately clear how useful this is (and easier to read <g>). The part where it decodes the table row data into a human readable form is an especially cool trick. Two notes about this.

- First, the lines are prefixed with the word COPY for a reason – they are actually intended to be formatted so you can grep on the word COPY and then use the “copy” command (or its psql cousin) to feed the data directly back into a staging table in the database for cleanup. How cool is that!

- Second, it can decode only a set of fairly standard data types and relation types.

    $ pg_filedump -h
    Version 11.0 (for PostgreSQL 11.x)
    Copyright (c) 2002-2010 Red Hat, Inc.
    Copyright (c) 2011-2018, PostgreSQL Global Development Group
    Usage: pg_filedump [-abcdfhikxy] [-R startblock [endblock]]
              [-D attrlist] [-S blocksize] [-s segsize] [-n segnumber] file
    Display formatted contents of a PostgreSQL heap/index/control file
    Defaults are: relative addressing, range of the entire file, block
                  size as listed on block 0 in the file
    The following options are valid for heap and index files:
    ...
    ...
    ...
      -D Decode tuples using given comma separated list of types
         Supported types:
           bigint bigserial bool char charN date float float4 float8 int
           json macaddr name oid real serial smallint smallserial text
           time timestamp timetz uuid varchar varcharN xid xml
         ~ ignores all attributes left in a tuple

Now you see what happens when I start having fun… a quick update about our SEAPUG meetup this past Tuesday turned into a blog article that’s way too long. :) Hope it’s useful, and as always let me know what I can improve!

Update 9/16/21 – It was an oversight that I didn’t link it when I first published this post – but the PostgreSQL community wiki has a page on corruption. That page has a ton of good information and is definitely worth referencing.

Seattle PostgreSQL Meetup This Thursday: New Location

I’m looking forward to the Seattle PostgreSQL User Group meetup this Thursday (June 20, 2019) at 5:30pm! We’re going to get an early sneak peek at what’s coming later this year in PostgreSQL’s next major release. The current velocity of development in this open source community is staggering and this is an exciting and valuable opportunity to keep up with where PostgreSQL is going next.

One thing that’s a bit unusual about this meetup is the new location and late timing of the announcement. I think it’s worth a quick blog post to mention the location: for some people this new location might be a little more accessible than the normal spot (over at the Fred Hutch).

The meetup this week will be closer to downtown at 2201 6th Avenue (the building says “Blanchard Plaza” above the entrance): right next to the Spheres, easily accessible from public transportation and free parking across the street.

If you live or work in Seattle and you’re interested in databases but you don’t normally attend the Seattle PostgreSQL User Group, it’s worth checking if this location might be more convenient and make the visit worthwhile.

Bring any database question you have – there are people here who know PostgreSQL well enough to answer anything you can throw at them! Also, as always, some pizza and drinks will be provided. Hope to see you there!

PostgresConf 2019 Training Days

It feels like PostgresConf in New York is in full swing, even though the main tracks haven’t even started yet!

(Oh, and by the way, as of this morning I heard there are still day-passes available for those who haven’t yet registered for the conference… and then you can come hear a great session about Wait Events in PostgreSQL this Thursday at 4:20pm!)

The first two days of PostgresConf are summits, tutorials and training sessions. A good chunk of my day today was helping out with Scott Mead’s intensive 3 hour hands-on lab Setting up PostgreSQL for Production in AWS – but outside of that I’ve managed to drop in to a number of other sessions that sounded interesting. I did my best to take down some notes so I could share a few highlights.

Monday March 18

Personally, my favorite session on Monday was Brent Bigonger’s session. He’s a database engineer at Amazon who was involved in migrating their Inventory Management System to Aurora PostgreSQL. I always love hearing good stories (part of why I’ve always been a fan of user groups) – this presentation gave a nice high level overview of the business, a review of the planning and execution process for the migration, and lots of practical lessons learned.

- Some of the tips were things people are generally familiar with – like NULLs behaving differently and the importance of performance management with a tool like Performance Insights.

- My favorite tip is getting better telemetry by instrumenting SQL with comments (SELECT /* my-service-call-1234 */ …) which reminded me of something I also read in Baron Schwartz’s recently updated e-book on observable systems: “including implicit data in SQL.”

- A great new tip (to me) was the idea of creating a heartbeat table as one more safety check in a replication process. You can get a sense for lag by querying the table and you can also use it during a cutover to get an extra degree of assurance that no data was missed.

- Another general point that really resonated with me: Brent gave a nice reminder that a simple solution which meets the business requirements is better than a sophisticated or complex solution that goes beyond what the business really needs. I feel tempted on occasion to leverage architectures because they are interesting – and I always appreciate hearing this reiterated!
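To make the SQL-comment tip a little more concrete, here is a minimal sketch of how an application might tag its statements before sending them. This is my own illustration, not code from Brent’s talk: the function name `tag_sql`, the allow-list of tag characters, and the `orders` table in the example are all assumptions.

```python
import re

# Allow-list for tags so a tag can never close the comment early (no "*/").
_TAG_RE = re.compile(r"[A-Za-z0-9_.:-]+")


def tag_sql(sql: str, tag: str) -> str:
    """Insert /* tag */ right after the first keyword of a SQL statement."""
    if not _TAG_RE.fullmatch(tag):
        raise ValueError(f"unsafe tag: {tag!r}")
    parts = sql.strip().split(None, 1)
    if not parts:
        raise ValueError("empty SQL statement")
    verb = parts[0]
    rest = parts[1] if len(parts) > 1 else ""
    return f"{verb} /* {tag} */ {rest}".rstrip()


# Hypothetical query against a hypothetical "orders" table.
print(tag_sql("SELECT count(*) FROM orders WHERE status = 'open'",
              "my-service-call-1234"))
```

The tag then travels with the statement text, so it shows up wherever the statement text does (for example in pg_stat_activity or in the server log).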

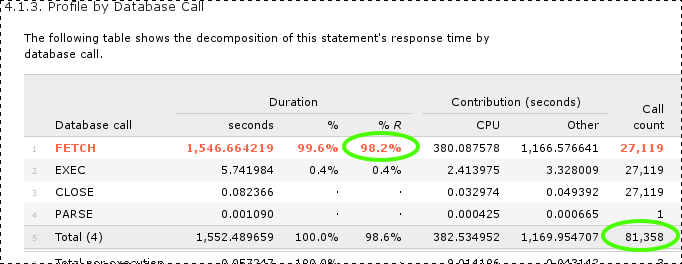

On the AWS track, aside from Brent’s session, I caught a few others: Jim Mlodgenski giving a deep dive on Aurora PostgreSQL architecture and Jim Finnerty giving a great talk on Aurora PostgreSQL performance tuning and query plan management. It’s funny, but I think my favorite slide from Finnerty’s talk was actually one of the simplest and most basic; he had a slide that just had a high-level list of steps for performance tuning. I don’t remember the exact list on that slide at the moment, but the essential process: (1) identify the top SQL (2) EXPLAIN to get the plan (3) make improvements to the SQL and (4) test and verify whether the improvements actually had the intended effect.

Other sessions I dropped into:

- Alvaro Hernandez giving an Oracle to PostgreSQL Migration Tutorial. I love live demos (goes along with loving hands on labs) and so this session was a hit with me – I wasn’t able to catch the whole thing but I did catch a walk-through of ora2pg.

- Avinash Vallarapu giving an Introduction to PostgreSQL for Oracle and MySQL DBAs. When I slipped in, he was just wrapping up a section on hot physical backups in PostgreSQL with the pg_basebackup utility. After that, Avi launched into a section on MVCC in PostgreSQL – digging into transaction IDs and vacuum, illustrated with block dumps and the pageinspect extension. The part of this session I found most interesting was actually a few of the participant discussions – I heard lively discussions about what extensions are and about comparisons with RMAN and older versions of Oracle.

As I said before, a good chunk of my morning was in Scott’s hands-on lab. If you ever do a hands-on lab with Scott then you’d better look out… he did something clever there: somewhere toward the beginning, if you followed the instructions correctly, then you would be unable to connect to your database! Turns out this was on purpose (and the instructions actually tell you this) – since people often have this particular problem connecting when they first start out on RDS, Scott figured he’d just teach everyone how to fix it. I won’t tell you what the problem actually is though – you’ll have to sign up for a lab sometime and learn for yourself. :)

As always, we had a lot of really interesting discussions with participants in the hands-on lab. We talked about the DBA role and the shared responsibility model, about new tools used to administer RDS databases in lieu of shell access (like Performance Insights and Enhanced Monitoring), and about how RDS helps implement industry best practices like standardization and automation. On a more technical level, people were interested to learn about the “pgbench” tool provided with postgresql.

In addition to the lab, I also managed to catch part of Simon Riggs’ session Essential PostgreSQL11 Database Administration – in particular, the part about PostgreSQL 11 new features. One interesting new thing I learned was about some work done specifically around the performance of indexes on monotonically increasing keys.

Interesting Conversations

Of course I learned just as much outside of the sessions as I learned in the sessions. I ended up eating lunch with Alexander Kukushkin who helped facilitate a 3 hour hands-on session today about Understanding and implementing PostgreSQL High Availability with Patroni and enjoyed hearing a bit more about PostgreSQL at Zalando. Talked with a few people from a government organization who were a long-time PostgreSQL shop and interested to hear more about Aurora PostgreSQL. Talked with a guy from a large financial and media company about flashback query, bloat and vacuum, pg_repack, parallel query and partitioning in PostgreSQL.

And of course lots of discussions about the professional community. Met PostgresConf conference volunteers from California to South Africa and talked about how they got involved in the community. Saw Lloyd and chatted about the Seattle PostgreSQL User Group.

The training and summit days are wrapping up and now it’s time to get ready for the next three days: keynotes, breakout sessions, exposition, a career fair and more! I can’t wait. :)

Column And Table Redefinition With Minimal Locking

TLDR: Note to future self… (1) Read this before you modify a table on a live PostgreSQL database. If you do it wrong then your app might totally hang. There is a right way to do it which avoids that. (2) Especially remember the lock_timeout step. Many blog posts around the ‘net are missing this and it’s very important.

Yesterday I was talking to some PostgreSQL users (who, BTW, were doing rather large-scale cool stuff in PG) and they asked a question about making schema changes with minimal impact to the running application. They were specifically curious about changing a primary key from INT to BIGINT. (Oh, you are making all your new PK fields BIGINT right?)

And then, lo and behold, I discovered a chat today on the very same topic. Seemed useful enough to file away on my blog so that I can find it later. BTW I got permission from Jim Nasby, Jim F and Robins Tharakan to blame them for this… ;)

Most useful part of the chat was how to think about doing table definition changes in PostgreSQL with minimal application impact due to locking:

- Use lock_timeout.

  - Can be set at the session level.

- For changes that do more than just a quick metadata update, work with copies.

  - Create a new column & drop old column instead of modifying.

  - Or create a new table & drop old table.

  - Use triggers to keep data in sync.

  - Carefully leverage transactional DDL (PostgreSQL rocks here!) to make changes with no windows for missing data.

We can follow this line of thought even for a primary key – creating a unique index on the new column, using existing index to update table constraints, then dropping old column.

One of the important points here is making sure that operations which require locks are metadata-only. That is, they don’t need to actually modify any data (while holding said lock) for example rewriting or scanning the table. We want these ops to run very very fast, and even time out if they still can’t run fast enough.
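Here is a rough sketch of the “run very fast, or time out” idea: wrap the DDL in a transaction with a short lock_timeout, and if the lock can’t be acquired in time, roll back, wait, and try again. This is only an illustration under assumptions. The `execute` callable and the `LockTimeout` exception are hypothetical stand-ins for whatever your driver provides (PostgreSQL reports a lock timeout as SQLSTATE 55P03, lock_not_available), and the timeout and retry values are arbitrary.

```python
import time


class LockTimeout(Exception):
    """Hypothetical stand-in for the driver error raised when lock_timeout expires."""


def run_ddl_with_lock_timeout(execute, ddl, timeout_ms=2000, attempts=5,
                              pause_s=1.0, sleep=time.sleep):
    """Try a DDL statement with bounded lock waits; return the attempt that succeeded."""
    for attempt in range(1, attempts + 1):
        try:
            execute("BEGIN")
            # SET LOCAL limits the setting to this transaction only.
            execute(f"SET LOCAL lock_timeout = '{int(timeout_ms)}ms'")
            execute(ddl)
            execute("COMMIT")
            return attempt
        except LockTimeout:
            # Leave the lock queue so application traffic is not stuck behind us.
            execute("ROLLBACK")
            if attempt == attempts:
                raise
            sleep(pause_s)
```

The point of rolling back rather than waiting is that a DDL statement sitting in the lock queue blocks every new application transaction that queues up behind it.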

A few minutes on google yields proof that Jim Nasby was right: lots of people have already written up some really good advice about this topic. Note that (as always) you should be careful about dates and versions in stuff you find yourself. Anything pre-2014 should be scrutinized very carefully (PostgreSQL has changed a lot since then); and for the record, PostgreSQL 11 changes this specific list again (and none of these articles seem to be updated for pg11 yet). And it should go without saying, but test test test…

- This article from BrainTree is my favorite of what I saw this morning. Concise yet clear list of green-light and red-light scenarios, with workarounds for all the red lights.

  - Add a new column, Drop a column, Add an index concurrently, Drop a constraint (for example, non-nullable), Add a default value to an existing column, Add an index, Change the type of a column, Add a column with a default, Add a column that is non-nullable, Add a column with a unique constraint, VACUUM FULL

- Citus has a practical tips article that’s linked pretty widely.

  - adding a column with a default value, using lock timeouts, Create indexes, Taking aggressive locks, Adding a primary key, VACUUM FULL, ordering commands

- assembled a list in 2016 which is worth reviewing.

  - Add a new column, Add a column with a default, Add a column that is non-nullable, Drop a column, Change the type of a column, Add a default value to an existing column, Add an index, Add a column with a unique constraint, Drop a constraint, VACUUM FULL, ALTER TABLE SET TABLESPACE

- Joshua Kehn put together a good article in late 2017 that especially illustrates the importance of using lock_timeout (though he doesn’t mention it in the article)

  - Default values for new columns, Adding a default value on an existing column, Concurrent index creation, ALTER TABLE, importance of typical transaction length

For fun and posterity, here’s the original chat (which has a little more detail) where they gave me these silly ideas:

[11/08/18 09:01] Colleague1: I have a question with regard to APG. How can we make DDL modifications to a table with minimalistic locking (downtime)?

[11/08/18 09:31] Jim N: It depends on the modification you're trying to make. Many forms of ALTER TABLE are very fast. Some don't even require an exclusive lock.

[11/08/18 09:32] Jim N: What you have to be careful of are alters that will force a rewrite of the entire table. Common examples of that are adding a new column that has a default value, or altering the type of an existing column.

[11/08/18 09:33] Jim N: What I've done in the past for those scenarios is to create a new field (that's null), put a before insert or update trigger on the table to maintain that field.

[11/08/18 09:33] Jim N: Then run a "backfill" that processes a few hundred / thousand rows per transaction, with a delay between each batch.

[11/08/18 09:34] Jim N: Once I know that all rows in the table have been properly updated, drop the old row, and maybe mark the new row as NOT NULL.

[11/08/18 09:43] Jim N: btw, I know there's been a talk about this at a conference in the last year or two...

[11/08/18 09:49] Jim F: What happens at the page level if the default value of an ALTER TABLE ADD COLUMN is null? Once upon a time when I worked at [a commercialized fork of PostgreSQL], which was built on a version of PostgreSQL circa 2000, I recall that the table would be versioned. This was a pure metadata change, but the added columns would be created for older-version rows on read, and probably updated on write. Is that how it currently works?

[11/08/18 09:55] Jim N: Jim F in essence, yes.

[11/08/18 09:56] Jim N: Though I wouldn't describe it as being "versioned"

[11/08/18 09:57] Jim N: But because columns are always added to the end of the tuple (and we never delete from pg_attribute), heap_deform_tuple can detect if a tuple is "missing" columns at the end of the tuple and just treat them as being null.

[11/08/18 09:57] Jim N: At least I'm pretty sure that's what's going on, without actually re-reading the code right now. 😉

[11/08/18 10:08] Jim F: does it work that way for non-null defaults as well? that would create a need for versioning, if the defaults changed at different points in time

[11/08/18 10:08] Robins: While at that topic.... Postgres v11 now has the feature to do what Jim F was talking about (even for non-NULLs). Although as Jim Nasby said, you still need to be careful about which (other) kind of ALTERs force a rewrite and use the Trigger workaround. "Many other useful performance improvements, including the ability to avoid a table rewrite for ALTER TABLE ... ADD COLUMN with a non-null column default"

[11/08/18 10:08] Jim F: exactly...
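The “backfill” that Jim N describes (a few hundred or thousand rows per transaction, with a delay between batches) might be sketched roughly like this. It is my own illustration rather than anything from the chat: the table `orders`, the old column `id` and the new column `id_big` are hypothetical names, and I’m assuming the table has an integer key that can be walked in ranges.

```python
def backfill_batches(min_id, max_id, batch_size):
    """Yield inclusive (low, high) id ranges that cover min_id..max_id."""
    if batch_size < 1:
        raise ValueError("batch_size must be at least 1")
    low = min_id
    while low <= max_id:
        high = min(low + batch_size - 1, max_id)
        yield low, high
        low = high + 1


def backfill_statement(low, high):
    # Hypothetical names: table "orders", old column "id", new column "id_big".
    return (
        "UPDATE orders SET id_big = id "
        f"WHERE id BETWEEN {int(low)} AND {int(high)} AND id_big IS NULL"
    )


for low, high in backfill_batches(1, 10, 4):
    print(backfill_statement(low, high))
```

Each statement would run in its own short transaction, with a sleep between batches, while the trigger keeps newly written rows in sync.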

Did we get anything wrong here? Do you disagree? Feel free to comment. :)

This Week in PostgreSQL – May 31

Since last October I’ve been periodically writing up summaries of interesting content I see on the internet related to PostgreSQL (generally blog posts). My original motivation was just to learn more about PostgreSQL – but I’ve started sharing them with a few colleagues and received positive feedback. Thought I’d try posting one of these digests here on the Ardent blog – who knows, maybe a few old readers will find it interesting? Here’s the update that I put together last week – let me know what you think!

Hello from California!

Part of my team is here in Palo Alto and I’m visiting for a few days this week. You know… for all the remote work I’ve done over the years, I still really value this in-person, face-to-face time. These little trips from Seattle to other locations where my teammates physically sit are important to me.

This is also part of the reason I enjoy user groups and conferences so much. They’re opportunities to meet with other PostgreSQL users in real life. In fact – at this very moment – one of the most important PostgreSQL conferences in the world is happening: PgCon! Having attended a few other conferences over the past year, I’m holding down the fort in the office this week in order to send a bunch of other teammates… but you can be sure I’m keeping an eye on twitter. :)

https://www.pgcon.org/2018/

https://twitter.com/search?l=&q=pgcon%20OR%20pgcon_org

=====

In the meantime, let’s get busy with the latest updates from the postgresql virtual world. First of all, I think the biggest headline is that (just in time for pgcon) we have the first official beta version of PostgreSQL 11! The release announcement headlines with major partitioning enhancements, more parallelism, a feature to speed up SQL execution by compiling certain operations on-demand into native machine code (JIT/Just-In-Time compilation), and numerous SQL enhancements. You can also read the first draft of the release notes. This is the time to start testing and give feedback to the development community!

https://www.postgresql.org/about/news/1855/

https://www.postgresql.org/docs/devel/static/release-11.html

Closely related to this, there’s one other really big headline that I’m excited about: the new AWS RDS Preview Environment. You can now try out the new pg11 features ahead of time with a single click! In part because the development community is so awesome, the first database available in the RDS Preview Environment is PostgreSQL. And the official PostgreSQL 11 beta release is _already_ available on RDS!! Personally I’m hoping that this benefits the community by getting more people to test and give feedback on new features being built for PostgreSQL 11. I hope it will make a great database even better.

https://aws.amazon.com/rds/databasepreview/

https://forums.aws.amazon.com/ann.jspa?annID=5788 (pg11 beta announcement)

Outside of the RDS and PG11-related stuff, I saw one other headline that I thought might be worth mentioning. On May 29, IBM published a blog post that caught my attention, featuring EnterpriseDB as an IBM partner on their Private Cloud deployments. You might not realize just how much PostgreSQL is being used and sold by IBM… but there’s Compose, ElephantSQL, and now EDB in the mix.

https://www.ibm.com/blogs/think/2018/05/next-enterprise-platform/

Part of the reason I took note of this was that I remember just last November when HPE ran a similar announcement, partnering with EDB on their on-premise subscription-based GreenLake platform.

https://news.hpe.com/hpe-expands-pay-per-use-it-to-key-business-workloads/

So it seems to me that EDB is doing some nice work at building up the PostgreSQL presence in the enterprise world – which I’m very happy to see. To be clear, this isn’t necessarily new… does anyone remember vPostgres?

Nonetheless, it feels to me like the ball is moving forward. It feels like PostgreSQL maturity and adoption are continually progressing at a very healthy pace.

=====

Moving on from headlines, let’s get to the real stuff – the meaty technical articles. :)

First up, who likes testing and benchmarking? One of my favorite activities, truth be told! So I can’t quite convey just how excited I am about the preview release of Kevin Closson’s pgio testing kit. For those unfamiliar, Kevin has spent years refining his approach for testing storage through database I/O paths. Much work was done in the past with Oracle databases, and he calls his method SLOB. I’m excited to start using this kit for exploring the limits of storage through PostgreSQL I/O paths too.

Right after Kevin published that post, Franck Pachot followed up with a short article using pgio to look at the impact of the ext4 “sync” filesystem option (made relevant by the recently disclosed flaws in how PostgreSQL has been interacting with Linux’s implementation of fsync).

https://blog.dbi-services.com/postgres-the-fsync-issue-and-pgio-the-slob-method-for-postgresql/

In addition to Kevin’s release of PGIO, I also saw three other generally fun technical articles. First, Kaarel Moppel from Cybertec published an article showing much lower-than-expected impact of pl/pgsql triggers on a simple pgbench execution. Admittedly, I want to poke around at this myself, having seen a few situations where the impact seemed higher. Great article – and it certainly got some circulation on twitter.

https://www.cybertec-postgresql.com/en/are-triggers-really-that-slow-in-postgres/

Next, Sebastian Insausti has published an article explaining PostgreSQL streaming replication. What I appreciate the most about this article is how Sebastian walks through the history of how streaming replication was developed. That context is so important and helpful!

https://severalnines.com/blog/postgresql-streaming-replication-deep-dive

Finally, the requisite Vacuum post. :) This month we’ve got a nice technical article from Sourabh Ghorpade on the Gojek engineering team. Great high-level introduction to vacuuming in general, and a good story about how their team narrowly averted an “xid wraparound” crisis.

https://blog.gojekengineering.com/postgres-autovacuum-tuning-394bb99fe2c0

=====

We’ve been following Dimitri Fontaine’s series on PostgreSQL data types. Well sadly (but inevitably) he has brought the series to a close. On May 24, Dimitri published a great summary of the whole data type series – this definitely deserves to be on every developer’s short list of PostgreSQL bookmarks!

https://tapoueh.org/blog/2018/05/postgresql-data-types/

But while we’re talking about data types, there were two more related articles worth pointing out this time around. First, Berend Tober from SeveralNines published a nice article back on the 9th about the serial data type in PostgreSQL. This is an integer type that automatically comes with not-null constraints and auto-assignment from a sequence.

https://severalnines.com/blog/overview-serial-pseudo-datatype-postgresql

Secondly, Hans-Jürgen Schönig from Cybertec gives a nice overview of mapping data types from Oracle to PostgreSQL. He has a little paragraph in there about mapping Oracle numbers to PostgreSQL integers and numerics. That little paragraph probably deserves triple-bold emphasis. Automatic mapping of every number column to PostgreSQL numeric has been cause for many, many performance woes in PostgreSQL databases!

https://www.cybertec-postgresql.com/en/mapping-oracle-datatypes-to-postgresql/
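As a rough sketch of what a more careful mapping could look like, here is a small function that picks a PostgreSQL type from the declared precision and scale of an Oracle NUMBER column. This is my own illustration, not the mapping from Hans-Jürgen’s article; the function name and the thresholds are assumptions, chosen so that every value with that many digits fits in the integer type (smallint tops out at 32767, integer at 2147483647, bigint at 9223372036854775807).

```python
def map_oracle_number(precision=None, scale=None):
    """Suggest a PostgreSQL type for an Oracle NUMBER(precision, scale) column."""
    if precision is None:
        # Unconstrained NUMBER: the declaration alone says nothing about the data.
        return "numeric"
    if scale is not None and scale > 0:
        # Fractional digits: keep exact decimal semantics.
        return f"numeric({precision},{scale})"
    if scale is not None and scale < 0:
        # Oracle's negative scale (rounding) has no simple integer equivalent.
        return "numeric"
    if precision <= 4:
        return "smallint"
    if precision <= 9:
        return "integer"
    if precision <= 18:
        return "bigint"
    return f"numeric({precision},0)"


for declared in [(9, 0), (10, 0), (19, 0), (10, 2), (None, None)]:
    print(declared, "->", map_oracle_number(*declared))
```

For unconstrained NUMBER columns the declaration isn’t enough, so you would need to look at the actual data (or ask the application team) before choosing an integer type.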

=====

For something that might be relevant to both developers and DBAs, I have a couple articles about SQL tuning. First, Brian Fehrle has written a great tuning introduction for the severalnines blog. His starting point is pg_stat_activity and the explain SQL command; exactly the same as my starting point. :)

https://severalnines.com/blog/why-postgresql-running-slow-tips-tricks-get-source

Next up, Louise Grandjonc from France has published a series of articles called “understanding EXPLAIN”. Did you ever wonder why there are _two_ numbers reported for execution time of each step? Louise answers this question and many more in these four articles!

http://www.louisemeta.com/blog/explain/

http://www.louisemeta.com/blog/explain-2/

http://www.louisemeta.com/blog/explain-3/

http://www.louisemeta.com/blog/explain-4/
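For anyone who hasn’t looked closely: I believe the “two numbers” are the pair shown as actual time=A..B on each plan node in EXPLAIN ANALYZE output, where A is the startup time (milliseconds until the node returns its first row) and B is the total time (milliseconds until it returns its last row), both averaged per loop. The cost estimates use the same startup..total shape. Here is a small sketch that pulls those values out of a plan line; the sample line is made up for illustration and the function name is my own.

```python
import re

_ACTUAL_RE = re.compile(
    r"actual time=(?P<startup>\d+\.\d+)\.\.(?P<total>\d+\.\d+) "
    r"rows=(?P<rows>\d+(?:\.\d+)?) loops=(?P<loops>\d+)"
)


def parse_actual(line):
    """Return startup/total time (ms), rows and loops for one plan line, or None."""
    m = _ACTUAL_RE.search(line)
    if m is None:
        return None
    return {
        "startup_ms": float(m["startup"]),
        "total_ms": float(m["total"]),
        "rows": float(m["rows"]),
        "loops": int(m["loops"]),
    }


# Made-up plan line for a hypothetical "orders" table.
sample = ("Seq Scan on orders  (cost=0.00..155.00 rows=10000 width=8) "
          "(actual time=0.012..1.480 rows=10000 loops=1)")
print(parse_actual(sample))
```

Because both numbers are per-loop averages, the time a node really consumed is roughly total_ms multiplied by loops.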

=====

Moving down the stack a little more, there were two articles about monitoring that seem worth passing along. Datadog has put out a lot of articles about monitoring recently. I hadn’t mentioned it before, but Emily Chang gave us yet another one back on April 12. As usual, I’m impressed with the level of quality in this thorough article which is specifically focused on PostgreSQL on RDS. I especially appreciated the key metrics, things I’ve used myself to characterize workloads.

https://www.datadoghq.com/blog/aws-rds-postgresql-monitoring/

Earlier I mentioned the severalnines blog post about replication – and the pgDash team published a nice article on May 2 about monitoring replication. They give another nice general architectural overview of replication as well.

https://pgdash.io/blog/monitoring-postgres-replication.html

=====

In my last update, I closed with a few articles about pgpool on the severalnines blog. It seemed worth mentioning that they have published a third, final article for their series.

https://severalnines.com/blog/guide-pgpool-part-three-hints-observations

Also, I spotted an article about pgpool on the AWS database blog too. While focused on Aurora PostgreSQL, there’s plenty to be learned about using pgpool with regular PostgreSQL here.

Along those lines, most people know about the other popular PostgreSQL connection pooler, pgbouncer. This is the connection pooler which is used by Gulcin Yildirim’s near-zero-downtime ansible-based upgrade tool, pglupgrade. Gulcin has written a few articles about the process recently, and being a big ansible fan I’ve been following along.

https://blog.2ndquadrant.com/near-zero-downtime-automated-upgrades-postgresql-clusters-cloud/

https://blog.2ndquadrant.com/near-zero-downtime-automated-upgrades-postgresql-clusters-cloud-part-ii/

But I wonder if the landscape is about to change? Yesterday the Yandex team announced that they have built and released a new load balancer to address limitations in pgpool and pgbouncer. I’ll be very interested to see what happens with odyssey!

https://www.postgresql.org/message-id/C9D1137E-F2A7-4307-B758-E5ED5559FFCA@simply.name (announcement)

https://github.com/yandex/odyssey

And that wraps things up for this edition.

Have a great week and keep learning!

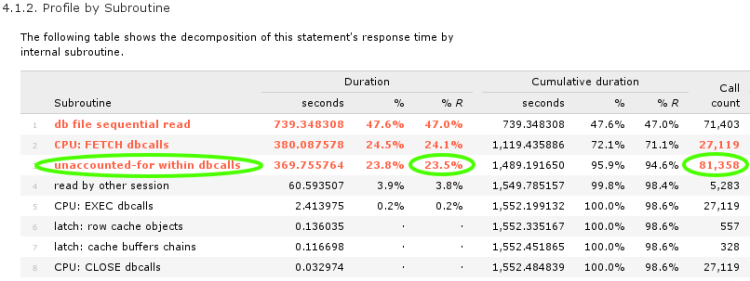

Understanding CPU on AIX Power SMT Systems

This month I worked with a Chicagoland company to improve performance for eBusiness Suite on AIX. I’ve worked with databases running on AIX a number of times over the years now. Nevertheless, I got thrown for a loop this week.

TLDR: In the end, it came down to a fundamental change in resource accounting that IBM introduced with the POWER7 processor in 2010. The bottom line is twofold:

- If SMT is enabled then the meaning of CPU utilization numbers is changed. The CPU utilization numbers for individual processes mean something completely new.

- Oracle database 11.2.0.3 (I haven’t tested newer versions but they might also be affected) is not aware of this change. As a result, all CPU time values captured in AWR reports and extended SQL traces are wrong and misleading if it’s running on AIX/POWER7/SMT. (I haven’t tested CPU time values at other places in the database but they might also be wrong.)

On other unix operating systems (for example Linux with Intel Hyper-Threading), the CPU numbers for an individual process reflect the time that the process spent on the CPU. It’s pretty straightforward: 100% means that the process is spending 100% of its time on the logical CPU (a.k.a. thread – each hardware thread context on a hyper-threaded core appears as a CPU in Linux). However AIX with SMT is different. On AIX, when you look at an individual process, the CPU utilization numbers reflect IBM’s opinion about what percentage of physical capacity is being used.

Why did IBM do this? I think that their overall goal was to help us in system-wide monitoring and capacity planning – however it came at the expense of tuning individual processes. They are trying to address real shortcomings inherent to SMT – but as someone who does a lot of performance optimization, I find that their changes made my life a lot more difficult!

History

Ls Cheng started a conversation in November 2012 on the Oracle-L mailing list about his database on AIX with SMT enabled, where the CPU numbers in the AWR report didn’t even come close to adding up correctly. Jonathan Lewis argued that double-counting was the simplest explanation while Karl Arao made the case for time in the CPU run queue. A final resolution was never posted to the list – but in hindsight it was almost certainly the same problem I’m investigating in this article. It fooled all of us. CPU-intensive Oracle workloads on AIX/Power7/SMT most frequently mislead performance experts into thinking there is a CPU run queue problem at the OS level. In fact, after researching for this article I went back and looked at my own final report from a consulting engagement with an AIX/SMT client back in August 2011 and realized that I made this mistake myself!

As far as I’m aware, Marcin Przepiorowski was the first person to really “crack” the case when he researched and published a detailed explanation back in February 2013 with his article Oracle on AIX – where’s my cpu time?. Marcin was tipped off by Steve Pittman’s detailed explanation published in a December 2012 article Understanding Processor Utilization on Power Systems – AIX. Karl Arao was also researching it back in 2013 and published a lot of information on his tricky cpu aix stuff tiddlywiki page. Finally, Graham Wood was digging into it at the same time and contributed to several conversations amongst oak table members. Just to be clear: I’m not posting any kind of new discovery! :)

However – despite the fact that it’s been in the public for a few years – most people don’t understand just how significant this is, or even understand exactly what the problem is in technical terms. So this is where I think I can make a contribution: by giving a few simple demonstrations of the behavior which Steve, Marcin and Karl have documented.

CPU and Multitasking

I recently spent a few years leading database operations for a cloud/SaaS company. Perhaps one of the most striking aspects of this job was that I had crossed over… from being one of the “young guys” to being one of the “old guys”! I certainly wasn’t the oldest guy at the company but more than half my co-workers were younger than me!

Well my generation might be the last one to remember owning personal computers that didn’t multitask. Ok… I know that I’m still working alongside plenty of folks who learned to program on punch-cards. But at the other end of the spectrum, I think that DOS was already obsolete when many of my younger coworkers started using technology! Some of you younger devs started with Windows 95. You’ve always had computers that could run two programs in different windows at the same time.

Sometimes you take a little more notice of tech advancements you personally experience and appreciate. I remember it being a big deal when my family got our first computer that could do more than one thing at a time! Multitasking (or time sharing) is not a complicated concept. But it’s important and foundational.

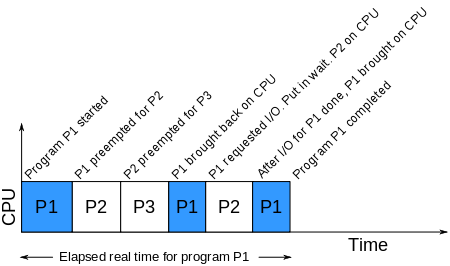

So obviously (I hope), if there are multiple processes and only a single CPU then the processes will take turns running. There are some nuances around if, when and how the operating system might force a process to get off the CPU but the most important thing to understand is just the timeline pictured above. Because for the rest of this blog post we will be talking about performance and time.

Here is a concrete example of the illustration above: one core in my laptop CPU can copy 13GB of data through memory in about 4-5 seconds:

$ time -p taskset 2 dd if=/dev/zero of=/dev/null bs=64k count=200k

204800+0 records in

204800+0 records out

13421772800 bytes (13 GB) copied, 4.73811 s, 2.8 GB/s

real 4.74

user 0.13

sys 4.54

The “taskset” command on linux pins a command to specific CPUs. The argument is a bitmask, so “2” (binary 10) selects only the second logical CPU, and “dd” is only allowed to execute on that CPU. This way, my example runs exactly like the illustration above, with just a single CPU.

What happens if we run two jobs at the same time on that CPU?

$ time -p taskset 2 dd if=/dev/zero of=/dev/null bs=64k count=200k &

[1] 18740

$ time -p taskset 2 dd if=/dev/zero of=/dev/null bs=64k count=200k &

[2] 18742

204800+0 records in

204800+0 records out

13421772800 bytes (13 GB) copied, 9.25034 s, 1.5 GB/s

real 9.25

user 0.09

sys 4.57

204800+0 records in

204800+0 records out

13421772800 bytes (13 GB) copied, 9.22493 s, 1.5 GB/s

real 9.24

user 0.12

sys 4.54

[1]- Done time -p taskset 2 dd if=/dev/zero of=/dev/null bs=64k count=200k

[2]+ Done time -p taskset 2 dd if=/dev/zero of=/dev/null bs=64k count=200k

Naturally, it takes twice as long – 9-10 seconds. I ran these commands on my linux laptop but the same results could be observed on any platform. By the way, notice that the “sys” number was still 4-5 seconds. This means that each process was actually executing on the CPU for 4-5 seconds even though it took 9-10 seconds of wall clock time.
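The arithmetic behind those numbers can be reproduced with a toy model: a single CPU that hands out fixed time slices to each runnable job in turn. This is only a sketch to illustrate wall-clock time versus CPU time. The 10 ms slice and the strict round-robin order are assumptions for the sake of the example, not a description of how the Linux scheduler actually works.

```python
def simulate_single_cpu(cpu_ms_needed, slice_ms=10):
    """Round-robin jobs on one CPU; return (wall_ms, cpu_ms) per job."""
    remaining = list(cpu_ms_needed)
    finished_at = [0] * len(remaining)
    clock = 0  # wall-clock ms since all jobs were started together
    while any(r > 0 for r in remaining):
        for i, r in enumerate(remaining):
            if r <= 0:
                continue
            run = min(slice_ms, r)
            clock += run          # only one job can be on the CPU at a time
            remaining[i] -= run
            if remaining[i] == 0:
                finished_at[i] = clock
    return [(finished_at[i], cpu_ms_needed[i]) for i in range(len(remaining))]


print(simulate_single_cpu([4600]))        # one job alone
print(simulate_single_cpu([4600, 4600]))  # two jobs sharing the CPU
```

With one job, wall time equals CPU time. With two jobs, each still accumulates 4600 ms of CPU time but needs roughly 9200 ms of wall time to finish, which is the same pattern as the “real” and “sys” numbers above.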

The “time” command above provides a summary of how much real (wall-clock) time has elapsed and how much time the process was executing on the CPU in both user and system modes. This time is tracked and accounted for by the operating system kernel. The linux “time” command uses the wait4() system call to retrieve the CPU accounting information. This can be verified with strace:

$ strace -t time -p dd if=/dev/zero of=/dev/null bs=64k count=200k

10:07:06 execve("/usr/bin/time", ["time", "-p", "dd", "if=/dev/zero", "of=/dev/null", \

"bs=64k", "count=200k"], [/* 48 vars */]) = 0

...

10:07:06 clone(child_stack=0, flags=CLONE_CHILD_CLEARTID|CLONE_CHILD_SETTID|SIGCHLD, \

child_tidptr=0x7f8f841589d0) = 12851

10:07:06 rt_sigaction(SIGINT, {SIG_IGN, [INT], SA_RESTORER|SA_RESTART, 0x7f8f83be90e0}, \

{SIG_DFL, [], 0}, 8) = 0

10:07:06 rt_sigaction(SIGQUIT, {SIG_IGN, [QUIT], SA_RESTORER|SA_RESTART, 0x7f8f83be90e0}, \

{SIG_IGN, [], 0}, 8) = 0

10:07:06 wait4(-1,

204800+0 records in

204800+0 records out

13421772800 bytes (13 GB) copied, 4.66168 s, 2.9 GB/s

[{WIFEXITED(s) && WEXITSTATUS(s) == 0}], 0, {ru_utime={0, 108000}, \

ru_stime={4, 524000}, ...}) = 12851

10:07:11 --- SIGCHLD {si_signo=SIGCHLD, si_code=CLD_EXITED, si_pid=12851, si_uid=1000, \

si_status=0, si_utime=10, si_stime=454} ---

10:07:11 rt_sigaction(SIGINT, {SIG_DFL, [INT], SA_RESTORER|SA_RESTART, 0x7f8f83be90e0}, \

{SIG_IGN, [INT], SA_RESTORER|SA_RESTART, 0x7f8f83be90e0}, 8) = 0

10:07:11 rt_sigaction(SIGQUIT, {SIG_IGN, [QUIT], SA_RESTORER|SA_RESTART, 0x7f8f83be90e0}, \

{SIG_IGN, [QUIT], SA_RESTORER|SA_RESTART, 0x7f8f83be90e0}, 8) = 0

10:07:11 write(2, "r", 1r) = 1

10:07:11 ...

Two notes about this. First, you’ll see from the timestamps that there’s a 5 second pause during the wait4() syscall, and the output from “dd” lands in the middle of the strace line for that call. Clearly this is when “dd” is running. Second, you’ll see that the wait4() call is returning two values called ru_utime and ru_stime. The man page on wait4() clarifies that this return parameter is the rusage struct which is defined in the POSIX spec. The structure is defined in sys/resource.h and is the same structure returned by getrusage(); the times() call reports the same user and system CPU times in its own struct. This is how the operating system kernel returns the timing information to “time” for display on the output.
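To make the wait4() mechanism concrete, here is a minimal Python sketch of what a “time”-like wrapper does on a Unix system: fork a child, exec the command, then block in wait4() and read ru_utime and ru_stime from the rusage struct the kernel returns. The function name run_and_account is hypothetical, and this is only an illustration of the syscall pattern seen in the strace output, not the actual source of /usr/bin/time.

```python
import os
import sys
import time


def run_and_account(argv):
    """Run argv as a child process; return wall-clock and CPU times in seconds."""
    start = time.monotonic()
    pid = os.fork()
    if pid == 0:
        # Child: replace this process image with the command being timed.
        try:
            os.execvp(argv[0], argv)
        finally:
            os._exit(127)  # only reached if exec failed
    # Parent: block in wait4() until the child exits. The kernel hands back
    # the child's rusage struct together with its exit status.
    _, status, rusage = os.wait4(pid, 0)
    real = time.monotonic() - start
    return {
        "real": real,
        "user": rusage.ru_utime,  # seconds spent in user mode
        "sys": rusage.ru_stime,   # seconds spent in kernel mode
        "exit": os.WEXITSTATUS(status) if os.WIFEXITED(status) else -1,
    }


if __name__ == "__main__":
    # Time a trivial child process and print in the style of "time -p".
    result = run_and_account([sys.executable, "-c", "pass"])
    for key in ("real", "user", "sys"):
        print(f"{key} {result[key]:.2f}")
```

The “real” number comes from the wrapper’s own clock, while “user” and “sys” come entirely from the kernel’s accounting. That split matters later in this article, because the kernel is free to decide what those two numbers mean.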

CPU Utilization on Linux with Intel SMT (Hyper-Threading)

Since many people are familiar with Linux, it will be helpful to provide a side-by-side comparison of Linux/Intel/Hyper-Threading with AIX/Power7/SMT. This will also help clarify exactly what AIX is doing that’s so unusual.

For this comparison, we will switch to Amos Waterland’s useful stress utility for CPU load generation. This program is readily available for all major unix flavors and cleanly loads a CPU by spinning on the sqrt() function. I found a copy at perzl.org already ported and packaged for AIX on POWER.

For our comparison, we will load a single idle CPU for 100 seconds of wall-clock time. We know that the process will spin on the CPU for all 100 seconds, but let’s see how the operating system kernel reports it.

First, let’s verify that we have SMT (Hyper-Threading):

user@debian:~$ lscpu | egrep '(per|name)'

Thread(s) per core: 2

Core(s) per socket: 2

Model name: Intel(R) Core(TM) i3-4005U CPU @ 1.70GHz

Next let’s run our stress test (pinned to a single CPU) and see what the kernel reports for CPU usage:

user@debian:~$ time -p taskset 2 stress -c 1 -t 100

stress: info: [20875] dispatching hogs: 1 cpu, 0 io, 0 vm, 0 hdd

stress: info: [20875] successful run completed in 100s

real 100.00

user 100.03

sys 0.00

Just what we would expect – the system is idle, and the process was on the CPU for all 100 seconds.
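The same measurement can be made from inside a process. The sketch below is an assumption-laden stand-in for the stress utility, not its actual code: it spins on sqrt() for a fixed wall-clock interval and asks the kernel, via getrusage(), how much CPU time it was charged. The function name spin_and_measure is hypothetical.

```python
import math
import resource
import time


def spin_and_measure(seconds):
    """Spin on sqrt() for `seconds` of wall-clock time; return (wall, cpu)."""
    before = resource.getrusage(resource.RUSAGE_SELF)
    start = time.monotonic()
    x = 0.0
    while time.monotonic() - start < seconds:
        x += math.sqrt(12345.678)  # busy work, never blocks
    wall = time.monotonic() - start
    after = resource.getrusage(resource.RUSAGE_SELF)
    # CPU time charged to this process during the spin: user + system.
    cpu = (after.ru_utime - before.ru_utime) + (after.ru_stime - before.ru_stime)
    return wall, cpu


if __name__ == "__main__":
    wall, cpu = spin_and_measure(1.0)
    print(f"wall {wall:.2f}s  cpu {cpu:.2f}s  ratio {cpu / wall:.0%}")
```

On an idle Linux machine the ratio should come out close to 100%, matching the time output above. Keep that expectation in mind for the AIX results later on.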

Now let’s use mpstat to look at the utilization of CPU #2 in a second window. (The taskset mask of 2 selects the second logical CPU, which mpstat numbers from zero as CPU 1.)

user@debian:~$ mpstat -P 1 10 12

Linux 3.16.0-4-amd64 (debian) 06/30/2016 _x86_64_ (4 CPU)

01:58:07 AM CPU %usr %nice %sys %iowait %irq %soft %steal %guest %gnice %idle

01:58:17 AM 1 0.00 0.00 0.10 0.00 0.00 0.00 0.00 0.00 0.00 99.90

01:58:27 AM 1 17.44 0.00 0.10 0.00 0.00 0.00 0.00 0.00 0.00 82.45

01:58:37 AM 1 100.00 0.00 0.00 0.00 0.00 0.00 0.00 0.00 0.00 0.00

01:58:47 AM 1 100.00 0.00 0.00 0.00 0.00 0.00 0.00 0.00 0.00 0.00

01:58:57 AM 1 100.00 0.00 0.00 0.00 0.00 0.00 0.00 0.00 0.00 0.00

01:59:07 AM 1 100.00 0.00 0.00 0.00 0.00 0.00 0.00 0.00 0.00 0.00

01:59:17 AM 1 100.00 0.00 0.00 0.00 0.00 0.00 0.00 0.00 0.00 0.00

01:59:27 AM 1 100.00 0.00 0.00 0.00 0.00 0.00 0.00 0.00 0.00 0.00

01:59:37 AM 1 100.00 0.00 0.00 0.00 0.00 0.00 0.00 0.00 0.00 0.00

01:59:47 AM 1 100.00 0.00 0.00 0.00 0.00 0.00 0.00 0.00 0.00 0.00

01:59:57 AM 1 100.00 0.00 0.00 0.00 0.00 0.00 0.00 0.00 0.00 0.00

02:00:07 AM 1 82.88 0.00 0.10 0.00 0.00 0.00 0.00 0.00 0.00 17.02

Average: 1 83.52 0.00 0.03 0.00 0.00 0.00 0.00 0.00 0.00 16.45

Again, no surprises here. We see that the CPU was running at 100% for the duration of our stress test.

Next let’s check the system-wide view. On Linux, most people use the top command when they want to see what’s happening system-wide. top shows a list of processes, and for each process it estimates how much time is spent on the CPU. top uses the same kernel-tracked POSIX timing data that the time command returns. It then divides by the wall-clock time to express that timing data as a percentage. If two processes are running on one CPU, then each process will report 50% CPU utilization.
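As a quick check of that arithmetic, the sketch below applies the same division to the time output shown earlier in this article. The helper name cpu_percent is hypothetical; the numbers are copied from the two pinned dd runs and the stress run above.

```python
def cpu_percent(user_seconds, sys_seconds, real_seconds):
    """CPU time charged to a process as a percentage of wall-clock time."""
    return 100.0 * (user_seconds + sys_seconds) / real_seconds


# (user, sys, real) taken from the "time -p" output earlier in the article.
runs = {
    "dd #1 (sharing a CPU)": (0.09, 4.57, 9.25),
    "dd #2 (sharing a CPU)": (0.12, 4.54, 9.24),
    "stress (CPU to itself)": (100.03, 0.00, 100.00),
}

for name, (user, system, real) in runs.items():
    print(f"{name}: {cpu_percent(user, system, real):.1f}%")
# dd #1 (sharing a CPU): 50.4%
# dd #2 (sharing a CPU): 50.4%
# stress (CPU to itself): 100.0%
```

The two dd processes that shared a CPU each come out at roughly 50%, while the stress process that had a CPU to itself comes out at 100%.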

We will run top in a third window while the stress and mpstat programs are running to get the system-wide view:

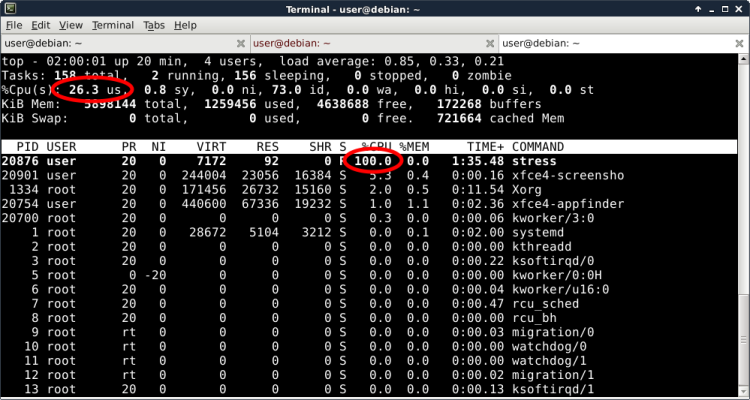

Linux top (in Irix mode) reports that the “stress” program is using 100% of a single CPU and that 26.3% of my total CPU capacity is used by the system.

This is wrong. Did you spot it? If you have any linux servers with hyper-threading enabled then I really hope you understand the problem with this!

The problem is with the second statement – that 26% of my total CPU capacity is used. In reality, a “hardware thread” is nothing like a “real core”. (For more details about Hyper-Threading and CPU Capacity, Karl Arao might be one of the best sources of information.) Linux kernel developers represent each hardware thread as a logical CPU. As a result (and this is counter-intuitive) it’s very misleading to look at that “total CPU utilization” number as something related to total CPU capacity.

What does this mean for you? You must set your CPU monitoring thresholds on Linux/Hyper-Threading very low. You might consider setting your critical threshold at 70%. Personally, I like to keep utilization on transactional systems under 50%. If your hyper-threaded Linux system seems to have 70% CPU utilization, then in reality you may be almost out of CPU capacity!

Why is this important? This is exactly the problem that IBM’s AIX team aimed to solve with SMT on POWER. But there is a catch: the source data used by standard tools to calculate system-level CPU usage is the POSIX-defined “rusage” process accounting information. IBM tweaked the meaning of rusage to fix our system-level CPU reporting problem – and they introduced a new problem at the individual process level. Let’s take a look.

CPU Utilization on AIX with Power SMT

First, as we did on Linux, let’s verify that we have SMT (Hyper-Threading):

# prtconf|grep Processor

Processor Type: PowerPC_POWER7

Processor Implementation Mode: POWER 7

Processor Version: PV_7_Compat

Number Of Processors: 4

Processor Clock Speed: 3000 MHz

Model Implementation: Multiple Processor, PCI bus

+ proc0 Processor

+ proc4 Processor

+ proc8 Processor

+ proc12 Processor

# lparstat -i|egrep '(Type|Capacity )'

Type : Shared-SMT-4

Entitled Capacity : 2.00

Minimum Capacity : 2.00

Maximum Capacity : 4.00

So you can see that we’re working with 2 to 4 POWER7 processors in SMT4 mode, which will appear as 8 to 16 logical processors.

Now let’s run the exact same stress test, again pinned to a single CPU.

# ps -o THREAD

USER PID PPID TID ST CP PRI SC WCHAN F TT BND COMMAND

jschneid 13238466 28704946 - A 0 60 1 - 240001 pts/0 - -ksh

jschneid 9044322 13238466 - A 3 61 1 - 200001 pts/0 - ps -o THREAD

# bindprocessor 13238466 4

# /usr/bin/time -p ./stress -c 1 -t 100

stress: info: [19398818] dispatching hogs: 1 cpu, 0 io, 0 vm, 0 hdd

stress: info: [19398818] successful run completed in 100s

Real 100.00

User 65.01

System 0.00

Wait… where did my CPU time go?! (This is one of the first things Marcin noticed too!) The AIX kernel reported that my process ran for 100 seconds of wall-clock time, but it was only running on the CPU for 65 seconds of that time!

On unix flavors such as Linux, this means that the operating system was not trying to put the process on the CPU during the missing time. Maybe the process was waiting for a disk operation or a signal from another process. But our stress test only executes the sqrt() function – so we know that it was not waiting for anything.

When you know the process was not waiting, there is only one other reason the operating system wouldn’t put the process on the CPU. Look again at our very first demo in this article: two (or more) processes needed to share the CPU. And notice that the user+system time was lower than wall-clock time, exactly like our output here on AIX!

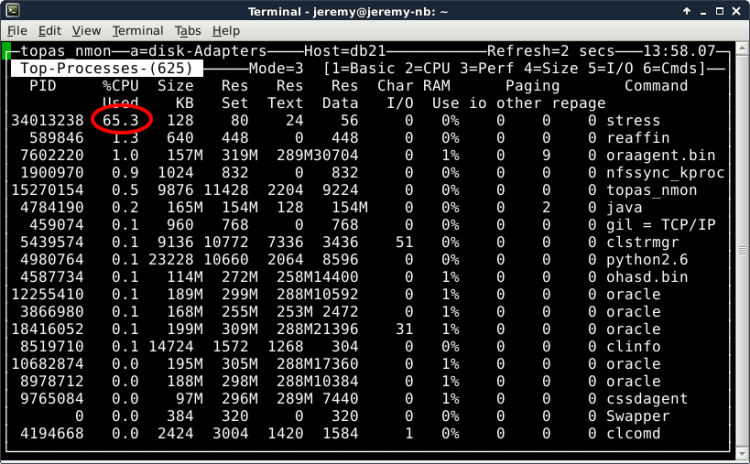

So let’s take a look at the system-wide view with the “nmon” utility in a second window. (topas reports CPU usage solaris-style while nmon reports irix-style, so nmon will be more suitable for this test. They are actually the same binary anyway, just invoked differently.)

Wait… this doesn’t seem right! Our “stress” process is the only process running on the system, and we know that it is just spinning CPU with the sqrt() call. The “nmon” tool seems to verify the output of the time command – that the process is only on the CPU for 65% of the time! Why isn’t AIX letting my process run on the CPU?!

Let’s take a look at the output of the mpstat command, which we are running in our third window:

# mpstat 10 12|egrep '(cpu|^ 4)'

System configuration: lcpu=16 ent=2.0 mode=Uncapped

cpu min maj mpc int cs ics rq mig lpa sysc us sy wa id pc %ec lcs

4 0 0 0 2 0 0 0 1 100 0 0 49 0 51 0.00 0.0 1

4 19 0 40 143 7 7 1 1 100 19 100 0 0 0 0.61 30.7 7

4 0 0 0 117 2 2 1 1 100 0 100 0 0 0 0.65 32.6 4

4 0 0 0 99 1 1 1 1 100 0 100 0 0 0 0.65 32.6 3

4 0 0 0 107 3 3 1 3 100 0 100 0 0 0 0.65 32.6 6

4 0 0 0 145 5 5 1 3 100 0 100 0 0 0 0.65 32.6 9

4 0 0 0 113 2 2 1 1 100 0 100 0 0 0 0.65 32.6 3

4 0 0 0 115 1 1 1 1 100 0 100 0 0 0 0.65 32.6 7

4 0 0 0 106 1 1 1 1 100 0 100 0 0 0 0.65 32.6 2

4 0 0 0 113 1 1 1 1 100 0 100 0 0 0 0.65 32.6 5

4 0 0 41 152 2 2 1 1 100 0 100 0 0 0 0.65 32.6 3

4 5 0 0 6 0 0 0 1 100 4 100 0 0 0 0.04 1.8 1

Processor 4 is running at 100%. Right away you should realize something is wrong with how we are interpreting the nmon output – because our “stress” process is the only thing running on this processor. The mpstat utility is not using the kernel’s rusage process accounting data and it shows that our process is running on the CPU for the full time.

So… what in the world did IBM do? The answer – which Steve and Marcin published a few years ago – starts with the little mpstat column called “pc”. This stands for “physical consumption”. (It’s called “physc” in sar -P output and in topas/nmon.) This leads us to the heart of IBM’s solution to the system-wide CPU reporting problem.

IBM is thinking about everything in terms of capacity rather than time. The pc number is a fraction that scales down utilization numbers to reflect utilization of the core (physical cpu) rather than the hardware thread (logical cpu). And in doing this, they don’t just divide by four on an SMT-4 chip. The fraction is dynamically computed by the POWER processor hardware in real time and exposed through a new register called PURR. IBM did a lot of testing and then – starting with POWER7 – they built the intelligence into the POWER processor hardware.

In our example, we are using one SMT hardware thread at 100% in SMT-4 mode. The POWER processor reports through the PURR register that this represents 65% of the processor’s capacity, exposed to us through the pc scale-down factor of 0.65 in mpstat. My POWER7 processor claims it is only 65% busy when one of its four threads is running at 100%.

I also ran the test using two SMT hardware threads at 100% on the same processor in SMT-4 mode. The processor scaled both threads down to 45% so that when you add them together, the processor is claiming that it’s 90% busy – though nmon & topas will show each of the two processes running at only 45% of a CPU! When all four threads are being used at 100% in SMT-4 mode then of course the processor will scale all four processes down to 25% – and the processor will finally show that it is 100% busy.

On a side note, the %ec column is showing the physical consumption as a percentage of entitled capacity (2 processors). My supposed 65% utilization of a processor equates to 32.6% of my system-wide entitled capacity. Not coincidentally, topas shows the “stress” process running at 32.6% (like I said, solaris-style).
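The scaling described above can be written out as a few lines of arithmetic. This sketch uses only the per-thread figures reported in this article for one POWER7 system in SMT-4 mode (0.65, 0.45 and 0.25); the names are hypothetical, the three-thread case is left out because it was not measured here, and other hardware or workloads may well produce different fractions.

```python
# Per-thread share of one core, as observed in this article on POWER7 in
# SMT-4 mode, keyed by the number of busy hardware threads on that core.
# Only the measured cases are listed; these are observations, not constants.
OBSERVED_THREAD_SHARE = {1: 0.65, 2: 0.45, 4: 0.25}


def core_utilization(busy_threads):
    """Fraction of the core the processor claims is busy."""
    return busy_threads * OBSERVED_THREAD_SHARE[busy_threads]


def reported_cpu_seconds(wall_seconds, busy_threads):
    """CPU time the accounting shows for a thread that spins the whole time."""
    return wall_seconds * OBSERVED_THREAD_SHARE[busy_threads]


def percent_of_entitlement(physical_consumption, entitled_capacity):
    """The %ec figure: physical consumption relative to entitled capacity."""
    return 100.0 * physical_consumption / entitled_capacity


if __name__ == "__main__":
    for n in sorted(OBSERVED_THREAD_SHARE):
        print(f"{n} busy thread(s): core {core_utilization(n):.0%} busy")
    print(f"100s spin, 1 thread: {reported_cpu_seconds(100, 1):.2f}s user time")
    print(f"%ec for pc=0.65, ent=2.0: {percent_of_entitlement(0.65, 2.0):.1f}")
```

With pc rounded to 0.65 the last line works out to 32.5 rather than the 32.6 that mpstat prints, presumably because mpstat computes %ec from an unrounded pc value.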

So AIX is factoring in the PURR ratio when it populates the POSIX rusage process accounting structure. What is the benefit? Topas and other monitoring tools calculate system load by adding up the processor and/or process utilization numbers. By changing the meaning from time to capacity at such a low level, it helps us to very easily get an accurate view of total system utilization – taking into account the real life performance characteristics of SMT.

The big win for us is that on AIX, we can use our normal paging thresholds and we have better visibility into how utilized our system is.

The Big Problem With AIX/POWER7/SMT CPU Accounting Changes

But there is also a big problem. Even if it’s not a formal standard, it has been a widely accepted convention for decades that the POSIX rusage process accounting numbers represent time. Even on AIX with POWER7/SMT, the “time” command baked into both ksh and bash still uses the old default output format:

# time ./stress -c 1 -t 66

stress: info: [34537674] dispatching hogs: 1 cpu, 0 io, 0 vm, 0 hdd

stress: info: [34537674] successful run completed in 66s

real 1m6.00s

user 0m41.14s

sys 0m0.00s